A conversation with Pathfinder’s digital innovation experts asks the important question: How do we leverage this technology, while protecting the communities we serve from its risks?

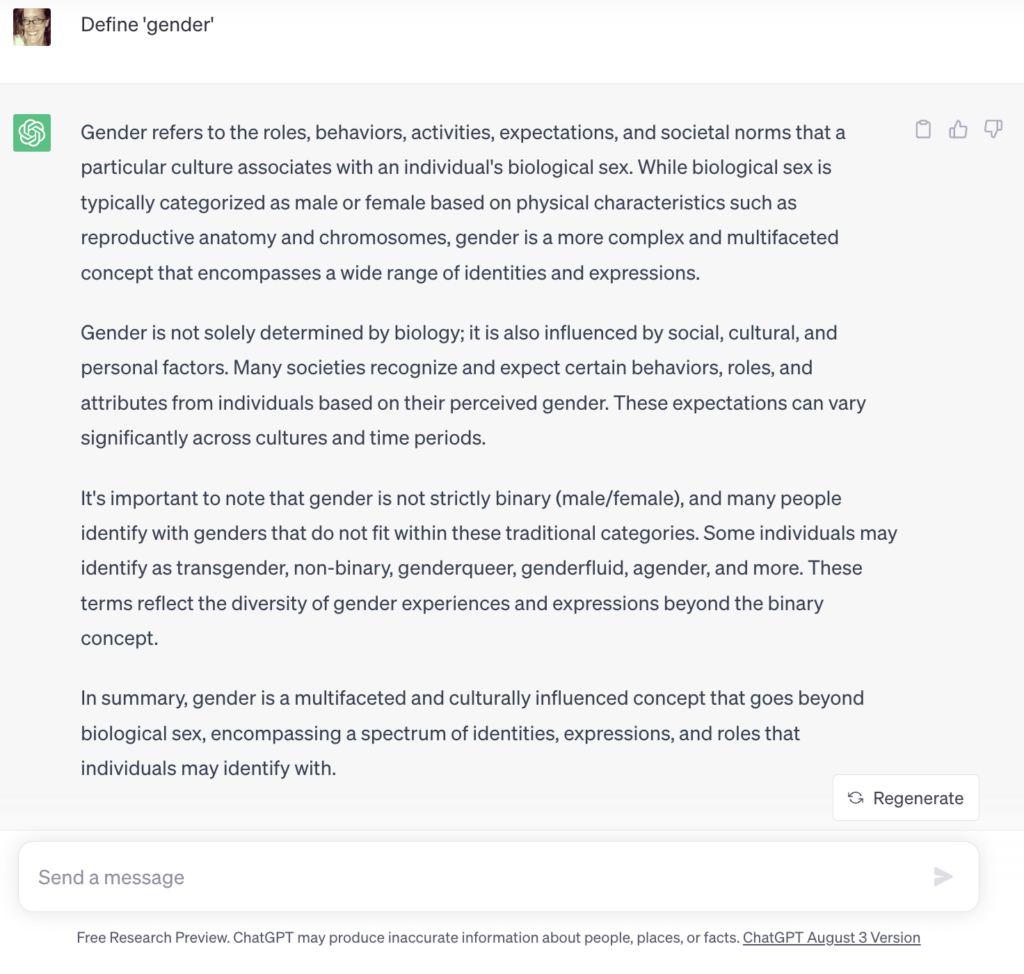

Prompt to ChatGPT: Define “gender.”

In a few seconds, ChatGPT produces an answer that, for all intents and purposes, fulfills the request, and does so with some nuance. It overlaps significantly with Pathfinder’s own definition of gender. But this simple prompt also raises numerous questions about the ethics, risks, and potential of organizations like Pathfinder using Artificial Intelligence (AI), including large language models (LLMs) like ChatGPT, for good.

A Commitment to the Ethical Use of AI

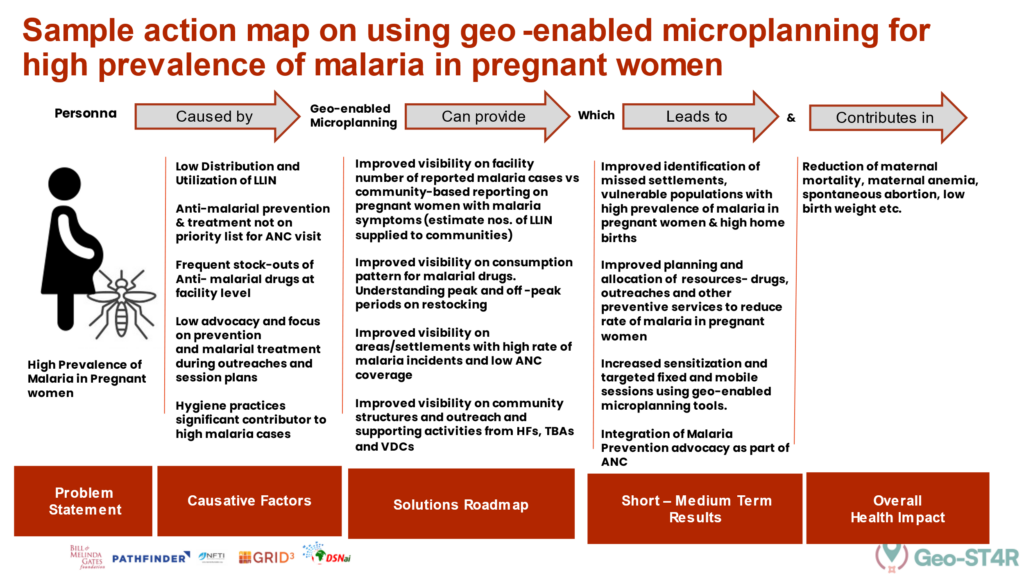

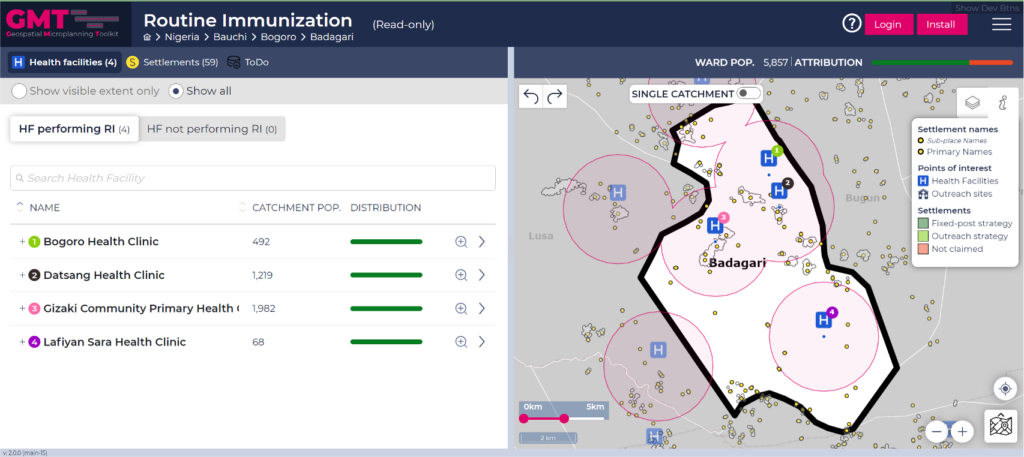

First, a definition: Artificial intelligence, or AI, can be defined as the ability of a digital computer or computer-controlled robot to perform tasks commonly associated with intelligent beings. Most recently, the world has been fascinated by ChatGPT, which is built on a type of AI known as a large language model (LLM). But Pathfinder and organizations like it have used various types of AI for years, from chatbots to GIS mapping. Our programs already integrate AI in numerous ways to support implementation of our work and increase program impact. But as AI continues to expand, so do the questions we need to ask to use it wisely.

Question: What should NGOs like Pathfinder be considering when they want to start using AI in their work?

Virginia Blaser, Member, President’s Council

NGOs like Pathfinder should consider several aspects before implementing AI in their work. These include identifying the specific problems they aim to address with AI technology and then setting clear objectives.

Additionally, NGOs should leverage publicly available algorithms from shared libraries such as those of the Digital Public Goods Alliance, whose work aligns with the UN’s Sustainable Development Goals. Even when drawing on credible sources, NGOs should be cautious about potential biases in the data sets behind openly shared algorithms and data.

Darlene Irby, Executive Director, Digital Innovation

For Pathfinder, AI can have several benefits across the entire organization. More and more organizations such as Pathfinder are turning to AI and machine learning (AI/ML) to increase their competitive advantage.

The growing volume and variety of data make AI/ML a valuable source of insights, allowing organizations to pivot more quickly and make resource and programmatic adjustments. For example, with AI, we can go beyond learning what happened and why, to discovering insights about the future.

AI can also provide synthesized information. Large language models, on which ChatGPT and others (Bard, etc.) are built, draw on data from a wide variety of sources, which can make analytics and predictions about sexual and reproductive health and rights (SRHR) treatment protocols more intuitive. In our work, AI can be used to predict trends in SRHR, identify high-risk populations, and design targeted interventions that meet the specific needs of communities.

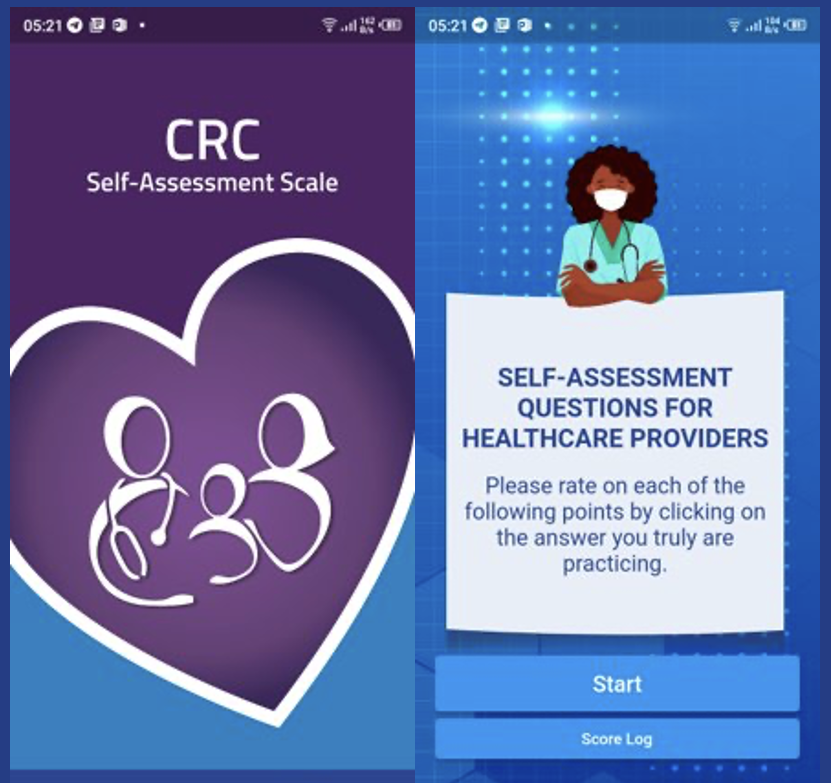

Much of our work occurs in underserved areas where there is limited access to healthcare workers. AI can be used in learning and eLearning platforms to create interactive, engaging learning experiences that adapt to individual needs. AI-driven chatbots and virtual assistants can also provide information and support in areas where access to healthcare professionals is limited. We are seeing growing uptake of AI-powered tools to support continuity of care during humanitarian crises, for example, AI chatbots and digital solutions that map and predict droughts, crop yields, and weather patterns.

Question: What are some of the easy and hard aspects of leveraging AI for NGOs?

Matt Saaks, Senior Digital Health Advisor

Pathfinder is an endorser of the Principles for Digital Development and can benefit from working within that framework. Making use of existing AI tools, using open standards, open data, and open source, and reusing and improving existing solutions puts innovative AI solutions within reach for our digital health practice. But we face many challenges. These include:

- Data quality and availability: AI needs high-quality, accurate data to make reliable predictions. Making sure the right data is available, accessible, and in the right context is a challenge, but one we rise to in our efforts to constantly adjust, adapt, and meet the needs of the people we serve.

- Publicly available large language models such as Bard and ChatGPT can provide broad responses to a wide range of questions. Creating AI-driven models true to our mission, values, and standards requires deliberate testing and great care. Models must reliably respond with accurate information on SRHR, free of misinformation or bias.

- Integrating AI solutions with existing data systems will require a combination of SRHR skills and experience with machine learning, AI, data science, and the Python and R programming languages. Close collaboration between digital technology teams and family planning domain teams will open new opportunities.

- As a country-led organization, we are especially cognizant of appropriately integrating our work with local requirements, including cultural and social acceptance, regulations, and working with the reality of limited but necessary infrastructure to deploy solutions that effectively reach all of our clients.

Hal Glasser, Chief Legal Officer

The lack of clarity around ownership of content that is generated by users of external large language models and other AI platforms is a factor to consider.

Ownership concerns and claims have been voiced by the owners of data that feed large language model algorithms without compensation or attribution (see the actors’ and writers’ guild strikes). I have also recently read that the US Copyright Office takes the position that material generated by large language model AI cannot be copyrighted. The outcomes on both these points may affect how we decide to use third-party large language model and AI tools.

How is Pathfinder already using AI technologies?

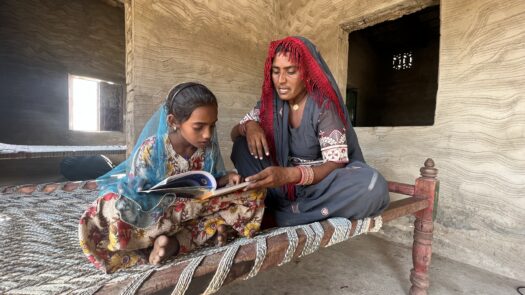

Across its programs, AI is already present in Pathfinder’s work.

At Pathfinder, we have incorporated digital technology into our programs for well over a decade through health system interventions and community solutions. Where appropriate, we have used it to improve clinical decision-making, increase access to and uptake of health services, and strengthen health systems for the delivery of reproductive health, family planning, and HIV services in the countries where we work.

Question: What challenges or risks should NGOs be concerned about when using AI and openly shared algorithms?

Darlene Irby, Executive Director, Digital Innovation

NGOs such as Pathfinder must work to ensure that AI models align with the needs and values of the communities that we serve, and that frameworks to support transparency, fairness, and accountability are in place.

An important consideration is having in-house expertise to understand how AI works and a data governance structure that coordinates a review process to ensure consistency and mitigate any risks.

Some additional challenges:

- Bias: AI requires training data, and if that training data is biased or not representative of targeted SRHR communities and populations, AI can perpetuate inequities. AI use must be grounded in ethical, legal, and compliance considerations and principles.

- Digital divide: AI has the potential to exacerbate existing socioeconomic disparities. Not all populations have equal access to AI-powered SRHR services due to disparities in technological access, digital literacy, or language barriers. Ensuring inclusivity and accessibility is vital to avoid leaving low-resource communities behind.

- Interpretability of models: Many AI models, especially deep learning algorithms, are often viewed as “black boxes” due to their complex nature. This lack of interpretability can make it difficult to explain the reasoning behind AI-generated recommendations, raising concerns about transparency and accountability.

- Privacy and security: Handling sensitive SRHR data requires strict privacy measures. If AI systems are not properly secured, there is a risk of data breaches and unauthorized access, potentially leading to harm or misuse of personal information.

Virginia Blaser, Member, President’s Council

One significant risk is the presence of biases in data sets, which can lead to skewed or misleading results. NGOs must ensure that the algorithms and data they use are free from bias.

For health-related NGOs like Pathfinder, protecting patients’ data privacy is essential while embracing openness and data sharing. Maintaining ethical standards in data usage is crucial.

Responsible AI development should prioritize transparency, accountability, and fairness, ensuring that AI systems are aligned with human values and subject to ongoing evaluation and improvement. NGOs are well placed to be at the forefront of developing AI within those parameters.

Question: What potential trends do you foresee for AI among health-focused NGOs like Pathfinder?

Matt Saaks, Senior Digital Health Advisor

In the future, I see more personalized client experiences. We have been producing chatbots, for example, for a long time, and I see that area becoming more personalized and more engaging for clients. I also foresee:

- More granular targeting of services, and better allocation and reallocation of resources to reach clients.

- Improved analytics for our project managers and communications teams to make data-driven decisions and share our impact. More AI-driven tools are emerging to make data gathering and processing easier, freeing up time for analysts and managers to turn their attention to information dissemination and value-added analysis, rather than focusing on report creation.

Virginia Blaser, Member, President’s Council

In the future, AI could excel in mass personalization, especially in the health sector.

AI’s capability to analyze vast amounts of data can help NGOs like Pathfinder gain a more holistic understanding of individuals and communities. By integrating clinical data with other relevant sources like social media, AI can aid in predicting and detecting shifts in health and providing warnings to healthcare providers.

What’s next for Pathfinder? And how can we make ethical use of AI moving forward?

At Pathfinder, we set a high bar for ourselves in implementing digital technology. We must do so to stay true to our mission. As we move forward as an organization, we dedicate ourselves to:

- Sharing innovations and contributing to digital global goods

- Being transparent about our approach

- Prioritizing data privacy and informed consent

- Making our deployments targeted

- Testing before releasing

- Implementing our projects to ensure the safety and privacy of our clients

- Monitoring for bias and taking corrective actions if/when needed

- Employing safeguarding procedures, especially for those who may be vulnerable to abuse

- Using a human-centered design approach and engaging stakeholders throughout the process

- Designing for scale

- Building for sustainability

Pathfinder believes that access to quality health care is not only a fundamental human right but can also be transformative for women, families, communities, and nations in reaching their social development goals. As such, Pathfinder employs agile development and human-centered design approaches, with a strong emphasis on co-design and user testing, in leveraging digital technology and data analytics across our programs. Our solutions are specifically designed to improve transparency and quality of services and reach youth and other marginalized populations with secure and private sexual and reproductive health services.